Build AI that actually works in production, not just demos.

Most AI projects fail after launch. We design, build, and scale AI-native systems that deliver measurable ROI with accuracy, cost control, and reliability built in from day one.

- $9.6B+ Revenue delivered for clients

- 50+ AI projects in production

- #1 Global Clutch Ranking — App Development

- 20 years of engineering excellence in Australia

Organisations that trust us to put AI into production

Most AI partners jump straight to solutions. We start with your situation.

Where you are right now changes everything - your risk, your priorities, and what good actually looks like. Pick your stage below. We'll show you exactly what we'd do and why.

20 years building production software · 50+ AI systems live · ISO 27001 certified

You know AI matters. You just don't want to build the wrong thing first.

You know AI is relevant. You might have tried ChatGPT internally. But you haven't committed to a specific use case, you're not sure which problems are worth solving with AI first, and you don't want to make an expensive mistake by building the wrong thing.

The real question isn't whether AI is right for your business. It's which problem to solve first, in what order, and with what investment — before you commit a dollar to development.

What most teams are worried about at this stage

- Is generative AI actually right for my business, or is this just hype?

- How do I identify which use cases would deliver real ROI — not just impressive demos?

- How do I choose an AI partner without making an expensive mistake?

- What if we build the wrong thing and have to start again?

What we do for teams at this stage

- Run a free AI Readiness Session to assess where GenAI can deliver the most value

- Map your highest-impact use cases with a clear business case for each

- Give you honest advice on what to build first — and what to leave for later

- Provide a realistic cost and timeline range before you commit to anything

- Tell you if AI isn't the right solution — and what might work better

"I knew AI was relevant to our business but had no idea where to start or what was actually feasible. The Discovery Session was the most useful hour I'd spent on this topic. They told us which ideas had legs and which ones to park. No hype, no pitch." Sam Khalef, MyBos

You know what you want to build. You need a team that will still be accountable six months after launch.

You've identified a specific problem you want to solve with AI. You've done some research. Maybe you've had a proof-of-concept built. What you need now is a team that will get it right in production — not just in a demo.

Your biggest concern right now: building something that actually works when real users depend on it. Not something that looks impressive in a pitch and falls apart under real-world conditions.

What most teams worry about at this stage

- How do I avoid building something that works in a demo but fails in production?

- What does it actually cost, and are there hidden expenses I haven't accounted for?

- Will the AI be accurate enough that we can actually rely on it?

- How do I define "good" before the build — so I know if we've succeeded?

What we do for teams ready to build

- Review your use case and flag any architecture or data risks before the build begins

- Define accuracy benchmarks and success criteria before a model is selected

- Build in clear stages with regular demos — you always know what's happening

- Test accuracy and fallback behaviour rigorously before production deployment

- Set up cost monitoring and alerting from day one — no infrastructure bill surprises

"We had a clear use case but no idea how to scope it technically. EB Pearls helped us define what 'good' looked like before we started, not after. That single decision changed the outcome of the project." Christiaan Lok, Rello Pay

Your AI is live. But something's not right — and it's getting worse.

What most teams worry about at this stage

- Why is the AI drifting? It was accurate at launch but results have degraded.

- Costs are scaling faster than usage — how do we bring them under control?

- Do we need to rebuild, or can we fix what we have?

- How do I explain to the board why we're spending more to fix something we already paid for?

What we do for teams in this situation

- Start with a free audit of what you have — accuracy, cost, latency, architecture risk

- Identify exactly what's wrong before recommending any fix

- Implement model retraining, cost optimisation, and drift detection

- Stabilise the system so your team stops firefighting and starts planning

- Give you a concrete plan with honest cost and timeline — no open-ended engagements

"Our AI was costing three times what we budgeted within six weeks of launch. EB Pearls found the issue — a single inefficient query pattern multiplying across millions of API calls. Costs dropped 40% and the system has been stable ever since." Chris Ferris, Coposit

Your AI works. Now the question is how much of your business it can run without creating new risk.

The product is working. Now the question is how to use AI to run the business smarter, not just the product. You're looking at workflows with high manual volume and wondering if AI can take them over without creating compliance, reliability, or oversight risk.

Your concerns aren't just technical — they're commercial, legal, and reputational. You need clear accountability at every automated decision, audit trails for governance, and a team that understands regulated environments.

What enterprise teams worry about at this stage

- Can we introduce agentic AI without creating compliance or reliability risk?

- How do we automate workflows without disrupting operations that already work?

- How do we prove the automation ROI justifies the investment to our board?

- What happens when the AI makes a wrong decision — who is accountable?

What we provide for enterprise automation

- Map your workflows and identify the most impactful automation opportunities

- Design agentic AI with defined decision boundaries and human override at every critical point

- Full audit trails for regulated industries — healthcare, finance, legal, government

- Implement automation in stages so your team adapts alongside it

- Define and track ROI metrics from the first sprint, not after launch

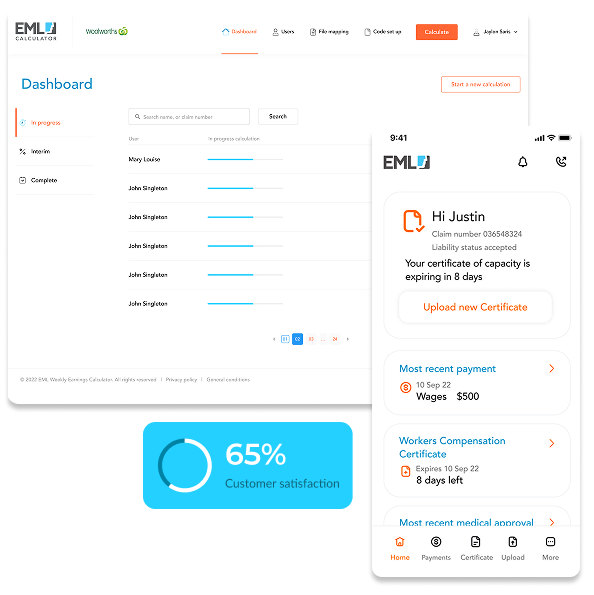

"We wanted to automate our claims triage but had real concerns about compliance and oversight. EB Pearls designed a system with clear human escalation points and a full audit trail. We cut resolution time by 65% and passed every compliance review." Matthew Baker, EML

The real reasons AI projects fail in production

These aren't technical problems. They're business problems that happen to have a technical cause. After 50+ AI projects, we've seen every one of these patterns — and we know exactly how to prevent them.

Built to demo, not to scale

Models that perform in tests often fail in real use, where inputs are messy and unpredictable. A $35k build that needs $180k to fix later isn’t a bargain.

Production architecture is the starting assumption, not the upgrade.

No accuracy standards before build

When accuracy standards aren't defined before build, teams only discover what "good" looks like once they are in production. That discovery can mean 4 weeks of emergency rework.

Accuracy benchmarks & hallucination thresholds agreed before model selection.
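
As a rough illustration of what an agreed benchmark can look like in practice, the sketch below scores a labelled evaluation run against thresholds fixed before the build. The names `EvalResult` and `meets_benchmark`, and the threshold values 0.90 and 0.02, are hypothetical placeholders rather than figures from any specific project.

```python
from dataclasses import dataclass


@dataclass
class EvalResult:
    """One evaluated example from a labelled test set."""
    correct: bool        # did the answer match the expected answer?
    hallucinated: bool   # did the answer assert something the source data does not support?


def meets_benchmark(results, min_accuracy=0.90, max_hallucination_rate=0.02):
    """Return (passed, accuracy, hallucination_rate) for an evaluation run.

    The default thresholds are illustrative assumptions; real values
    should be agreed with stakeholders before a model is selected.
    """
    if not results:
        raise ValueError("evaluation set is empty")
    total = len(results)
    accuracy = sum(r.correct for r in results) / total
    hallucination_rate = sum(r.hallucinated for r in results) / total
    passed = accuracy >= min_accuracy and hallucination_rate <= max_hallucination_rate
    return passed, accuracy, hallucination_rate
```

A candidate model that clears the accuracy bar but exceeds the hallucination limit still fails the gate, which is the point of agreeing both numbers in advance.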

Infrastructure costs spiral undetected

A single inefficient query pattern can multiply across millions of API calls. A simple monitoring alert can catch it on day two.

Cost monitoring and alerting configured before your first user arrives.
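
A minimal sketch of such an alert, assuming daily spend and request counts are already being collected. The function name `cost_alerts` and both limits are hypothetical; in a real system the inputs would come from a cloud billing export or usage logs.

```python
def cost_alerts(daily_cost, daily_requests, daily_budget, max_cost_per_request):
    """Return a list of human-readable alerts for one day's usage.

    daily_cost: total spend for the day, in dollars
    daily_requests: number of AI requests served that day
    daily_budget, max_cost_per_request: hypothetical limits set before launch
    """
    alerts = []
    # Absolute check: total spend against the agreed daily budget.
    if daily_cost > daily_budget:
        alerts.append(f"Daily spend ${daily_cost:.2f} exceeds budget ${daily_budget:.2f}")
    # Relative check: cost per request rising means cost is growing faster than usage.
    if daily_requests > 0:
        cost_per_request = daily_cost / daily_requests
        if cost_per_request > max_cost_per_request:
            alerts.append(
                f"Cost per request ${cost_per_request:.4f} exceeds limit ${max_cost_per_request:.4f}"
            )
    return alerts
```

Tracking cost per request alongside total spend matters because an inefficient query pattern can show up in the per-request figure while the total is still under budget.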

Model drift goes undetected for months

Model drift is invisible without monitoring. By the time it is noticed, users may have quietly stopped trusting the AI and started manually overriding it.

Drift detection and model monitoring standard across every deployment.
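
One simple signal to monitor is how often users override the AI. The sketch below tracks that rate over a sliding window and flags when it rises above a launch baseline. The class name `OverrideRateMonitor`, the window size, and the tolerance are illustrative assumptions. An override rate is a proxy, not a full statistical drift test, and would normally sit alongside checks on input data and labelled accuracy.

```python
from collections import deque


class OverrideRateMonitor:
    """Tracks how often users override the AI over a sliding window of decisions."""

    def __init__(self, baseline_rate, window_size=500, tolerance=0.05):
        # baseline_rate: override rate measured at launch (hypothetical input)
        # tolerance: how far above baseline the rate may rise before flagging
        self.baseline_rate = baseline_rate
        self.tolerance = tolerance
        self.window = deque(maxlen=window_size)  # oldest decisions drop off automatically

    def record(self, overridden):
        """Record one decision; True means a user overrode the AI's output."""
        self.window.append(bool(overridden))

    def current_rate(self):
        """Share of decisions in the window that were overridden."""
        return sum(self.window) / len(self.window) if self.window else 0.0

    def drift_suspected(self):
        """Flag only once the window is full, to avoid noisy early alerts."""
        full = len(self.window) == self.window.maxlen
        return full and self.current_rate() > self.baseline_rate + self.tolerance
```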

Data architecture built for demos, not compliance

Many AI builds treat data as an afterthought. The result is sensitive data sent to third-party models, no data sovereignty controls, and no audit trail to review.

Data architecture, sovereignty, and privacy controls designed in Week 1.

The vendor disappears post-launch

Many AI vendors complete the build and move on, leaving behind a system the client doesn't have the expertise to maintain.

Post-launch support agreed before build begins. Full documentation. Same team.

Most AI systems are built to demo. Ours are Built to Last™.

After 50+ generative AI systems in production, we've identified the same pattern: the failures that show up six months after launch almost always trace back to decisions made in the first two weeks.

Build It Right

Ship with foundations that hold under real-world conditions.

- Architecture designed for production load, not demo traffic

- Accuracy benchmarks defined before a model is selected

- Security, compliance and fallback behaviour built in from day one

- Tested against edge cases before real users see it

Grow Without Breaking

Your AI should handle growth with confidence, not strain under it.

- Cost monitoring that alerts before bills spiral

- Drift detection so accuracy degradation is caught early

- New capabilities added without destabilising what already works

- Real visibility into what's happening inside your system

Stay Ahead

The model landscape changes. Your business changes. Your AI needs to keep up.

- Model upgrades evaluated and implemented without downtime

- Automation expanded only where it genuinely reduces risk

- Audit trails and governance updated as regulations evolve

- Continuous improvement without a full rebuild

You can start at any stage. Whether you're building from scratch, stabilising a live system, or expanding into enterprise automation, the Built to Last™ system gives you a clear path forward that doesn't require a rebuild six months later.

We didn't add AI to our process. We rebuilt around it.

Faster Prototyping

AI Features, Built-In

Automated Quality Assurance

Leaner Team, Same Output

Real AI. Real systems. Real results.

Real businesses. Real AI. All of it live in production.

AWN: AI-powered wool valuation delivering 94% accuracy and 60% faster appraisals

We built a predictive analytics system for AWN trained on auction data, fibre measurements, and market indices. The result: 94% valuation accuracy, 60% faster appraisals, and zero peak-season bottlenecks.

Explore AWN Story

EML: AI claims triage that cut resolution time by 65% — with full compliance

We rebuilt EML's claims triage from the ground up. The result: 65% faster resolution, 40% reduction in manual processing cost, and a system that passed every compliance review at launch.

Explore EML Story

Artis: AI platform rebuilt to be 80% more scalable

We delivered a full rebuild of the AI engine and data pipeline: smarter model architecture, scalable infrastructure, and performance monitoring, with a zero-downtime migration to the new system.

Explore Artis Story

After 50+ AI systems in production, these are the AI capabilities we know how to build.

Every capability below has been scoped, built, and delivered in production — not demonstrated in a sandbox.

Intelligent Assistants & Chatbots

AI that understands context, handles complex queries, and knows when to escalate to a human.

Document Intelligence

Extract, classify, summarise and act on information from unstructured documents at scale.

Workflow Automation & Agentic Systems

Multi-step workflows with defined decision boundaries, human override points, and full audit trails.

Predictive Analytics & Engines

Models that learn from your data to forecast demand, personalise experiences, or surface the next best action.

RAG & Enterprise Knowledge Systems

Connect AI to your internal knowledge base, documentation, or data warehouse. Accurate retrieval, source attribution, and hallucination controls.

Cloud Infrastructure & MLOps

The architecture that keeps AI running reliably in production. Deployment pipelines, cost monitoring, drift detection, and 99.9% uptime SLA.

AI Integration & API Layer

Connect AI capabilities to your existing systems — CRM, ERP, support platforms, data pipelines. No rip-and-replace. Built to work alongside what you already have.

Compliance-Ready AI for Various Industries

Healthcare, finance, legal, and government-ready AI with audit trails, data sovereignty controls, and human escalation at every critical decision point.

Fintech & Financial Services

Lending automation, fraud detection, claims triage and customer intelligence. Built with audit trails and regulatory compliance.

Health & Healthcare Technology

Patient triage, administrative automation, and diagnostic support tools. Privacy-first architecture with human oversight.

Insurance

Claims processing automation, compliance monitoring, underwriting support, and customer communication AI. Full audit trails for every automated decision.

Education & Edtech

Personalised learning tools, administrative automation, student support systems, and content intelligence. Built for institutional scale.

Retail & eCommerce

Recommendation engines, demand forecasting, and inventory intelligence. Built to handle real transaction volume.

Telecommunications

Customer engagement automation, network intelligence, churn prediction, and support AI. Built for high-volume, always-on environments.

Government & Public Sector

Compliance-first AI with full data sovereignty, audit trails, and human oversight. Built to meet Australian government data requirements.

SaaS & Technology

AI features embedded into existing products — recommendation engines, intelligent search, automation layers, and predictive analytics.

Production-First Architecture

Every system is designed for real-world load from day one — not retrofitted after launch.

Plenti's lending platform scaled to 40,000+ users without a single architecture rebuild.

Accuracy Benchmarking

Accuracy thresholds agreed upfront, not discovered post-launch.

EML's claims triage system passed every compliance review at launch.

Staged Build with Regular Demos

We build in clear stages with working demos at every milestone.

Two-week sprints with a working demo at the end of each. No client has ever been surprised at handover.

Drift Detection & Monitoring

We build drift detection and cost alerting into every system from sprint one.

One SaaS client's costs dropped 40% after monitoring identified a single inefficient query pattern.

Human Override by Design

Every automated decision with commercial, legal, or reputational risk has a defined human escalation point.

EML's claims automation cut resolution time 65% with full compliance maintained.
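
To make the idea of a defined escalation point concrete, here is a minimal sketch of a confidence-based router that writes every decision to an audit log. The function `route_decision`, the outcome labels, and the 0.85 boundary are hypothetical and do not describe the EML system or any other specific deployment.

```python
import json
from datetime import datetime, timezone


def route_decision(case_id, prediction, confidence, audit_log, min_confidence=0.85):
    """Apply confident predictions automatically; escalate the rest to a human.

    Every decision, automated or escalated, is appended to audit_log as a
    JSON line. min_confidence is an illustrative decision boundary.
    """
    outcome = "automated" if confidence >= min_confidence else "escalated_to_human"
    entry = {
        "case_id": case_id,
        "prediction": prediction,
        "confidence": confidence,
        "outcome": outcome,
        "timestamp": datetime.now(timezone.utc).isoformat(),
    }
    audit_log.append(json.dumps(entry))
    return outcome
```

In practice the log would go to append-only storage rather than an in-memory list, and the boundary would be set per decision type according to its risk.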

Compliance & Audit Trail Architecture

Audit trails, data sovereignty controls, and governance frameworks designed in from sprint one — not bolted on after a compliance review.

Every system delivered into healthcare, insurance, and finance has passed its first compliance review.

Honest Cost Scoping

We scope and cost every engagement before you commit. No open-ended retainers, no hidden infrastructure costs.

Fixed-scope, fixed-cost proposals before signing anything. Total infrastructure costs priced upfront.

Post-Launch Accountability

Ongoing monitoring, model maintenance, and performance accountability agreed before the build begins.

Pymble Ladies' College — 30% admin reduction sustained across 1,600+ users, not just at go-live.

Foundation Models

OpenAI GPT-4o

Claude (Anthropic)

Gemini (Google)

Llama (Meta)

Mistral

AI Orchestration & Agents

LangChain

LlamaIndex

AutoGen

CrewAI

Custom agentic frameworks

RAG & Vector Infrastructure

Pinecone

Weaviate

pgvector

Chroma

Qdrant

Cloud Infrastructure

AWS

Google Cloud

Azure

Vercel

Railway

MLOps & Model Management

MLflow

Weights & Biases

SageMaker

Vertex AI

Data & Integration Layer

Snowflake

BigQuery

dbt

Kafka

REST & GraphQL APIs

Model Deployment & Management

Amazon SageMaker

Bedrock Model Management

MLflow

AI Development & Coding

Amazon CodeWhisperer

OpenCode

GitHub Copilot Enterprise

From the first conversation to production AI, what happens at every step

- AI Validation: 30 minutes. Free

  A senior AI strategist reviews your situation before the call. In 30 minutes you'll know whether AI is right for your use case, which use case to build first, what it will realistically cost, and what the three biggest risks are. NDA signed before any detailed discussion.

- Proof of Capability: 1-2 weeks. From $5K

  We build a working prototype against your actual data. Not a mockup. A real AI system producing real responses so you can see actual behaviour, including edge cases and failure modes, before committing to a full build.

- Scoping & Agreement: 1-2 weeks. From $3K

  Technical specification, integration map, cost model, and delivery plan all documented and agreed before development begins. No scope that silently expands. What we scope is what you pay.

- Build: 8-16 weeks. From $50K

  Two-week sprints. Working AI at the end of every cycle, actual deployable software you can test and give feedback on. Cost monitoring running from sprint one.

- Evolve: Ongoing. From $800

  Your AI is watched continuously after launch. Drift detected before users notice. Costs monitored before they spiral. Model retrained as your data evolves. New capabilities added when they genuinely help.

We're a long-term partner, not a team that ships and disappears.

Book Your Free AI Discovery Session

In their own words.

Marketing Manager at Mondial VGL

What stood out straight away was how clear they were on cost. We got a realistic estimate early, understood what was included, and there were no hidden extras later. As part of our research process, we received a few vague quotes from other agencies. When comparing these to EB Pearls’ transparent costing, it was a no-brainer.

Digital Transformation Lead, Vodafone

Co-Founder

We’re extremely impressed with EB Pearls’ work, technical skill, and ability to adapt, iterate, and learn new skills. Their high attention to detail and eternally positive attitude make working with EB Pearls a wonderful experience.

Product owner, BAXTA

Co-Founder, Intro Dating App

Director, Care Careers

In-house team, AI platform tool, offshore vendor, or specialist partner: what's right for you?

Here's an honest picture of what each looks like in practice.

In-House Team

AI Platform Tool

Offshore Agency

Generalist Agency

EB Pearls

Collecting quotes from AI vendors?

We'll benchmark them for you — no charge.

Bring us what you've been quoted and we'll tell you what's included, what's missing, and whether it's fair.

What our clients have achieved with AI in production

65% Reduction in claims resolution time

40,000+ Users on lending platform

50% Faster loan processing

$200M+ Processed through AI-powered payments platform

30% Reduction in administrative workload

150% Increase in customer engagement

40% Reduction in AI infrastructure costs

3× Sales growth in 3 months

$9.6B+ Total client revenue delivered

We're the right AI partner for some organisations. Not all of them.

We'd rather be clear up front than waste your time. Here's where we're a strong fit — and where we're probably not.

We’re probably not the right fit when…

We’re a strong fit when…

If that sounds like you — let's talk.


The Team You'll Actually Work With.

Michael Signal

Creative Director

Nijen Tamrakar

Accounts Manager

Binisha Sharma

Account Manager

Prasanna Bajracharya

Associate Engineering Manager

Karun Shakya

Engineering Manager

Questions We Get Asked Most. Answered Honestly.

Straight answers to the questions we’re asked most often about mobile app development, pricing, process, and what it’s like to work with us.

The first conversation costs you nothing. A wrong AI decision costs you everything.

Whether you're exploring a use case, ready to build, or have AI that's underperforming, the first step is a straight conversation.

What to expect

1. Share a few details

   Complete the form with your contact details and what you need help with.

2. Book your free discovery call

   Once you submit the form, choose a time that suits you for your discovery call.

3. Privacy comes first

   Sign an optional NDA to ensure the highest privacy level and protection of your idea.

4. Discovery call

   We’ll discuss your goals, the support you need and answer your questions. If we’re a good fit, we’ll outline the next steps.
